PDSH, SLE, and SLURM

Managing many systems from one is a basic need for system administrators. It is a need developers have clearly addressed many times, judging from the myriad tools available that delegate work to other systems. Often the criteria these tools use to target systems for management are understandably inflexible. Directory service management consoles and configuration management systems are good examples of these types of tools and criteria. However, the HPC administrator often needs to interact with varying enumerations of systems. These systems can present as predictable static groups, but in HPC dynamic system groupings are also common. Static groups could be determined by criteria such as hardware, work queue, or geography, while dynamic groups are composed from ephemeral criteria such as system state or running jobs.

The few tools that satisfy this niche multi-system need have been around since UNIX administrators first had to manage several systems concurrently. For clarity, the functionality being described is the ability to issue a command on one system that is distributed to selected systems for local execution. Distributed Shell (DSH) from IBM® serves as an example of such a tool developed for UNIX clusters. This article focuses on the Parallel Distributed Shell (PDSH) utility for Linux, but later examples will demonstrate that even it borrows a bit from DSH.

Deploying and using PDSH for flexible real-time system management can be very simple. This article will not drill down into the Linux application and security frameworks that PDSH leverages. The focus will be on using PDSH on the SLE distribution and the administrative benefit of using the SLE PDSH implementation against SLURM clusters. However, the solutions and recommendations communicated here should work equally well on other distributions.

Software packages

The pdsh package on SLE 12 and 15 is available in the High Performance Computing Module, enabled in YaST, and will install the following applications.

pdsh: Parallel remote shell program

/usr/bin/pdsh Can run multiple remote commands in parallel

/usr/bin/pdcp Can copy files to multiple remote hosts in parallel

/usr/bin/rpdcp Can copy files from multiple remote hosts in parallel

/usr/bin/dshbak Formats and sorts pdsh output for humans

~# zypper install pdsh

On SLE 12 and 15, the following remote command modules are included with PDSH by default:

SSH: Opens multiple instances of openSSH to execute tasks on remote hosts

EXEC: Executes an arbitrary command for each target host (usually over SSH)

MRSH: Uses the mrsh protocol and credential based auth to execute tasks on remote hosts

PDSH can be used to query which remote command modules are installed.

~# pdsh -L

The remote command module plugin files can be found in the “/usr/lib64/pdsh/” directory.
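As a rough sketch of how the module listing could be checked from a script, the following filters "pdsh -L" style output for remote command modules. It assumes each module is reported on a line of the form "Module: rcmd/NAME"; the function names are hypothetical helpers, not part of PDSH.

```shell
#!/bin/sh
# Print the names of the rcmd modules found in `pdsh -L` style output on stdin.
# Assumption: each module is reported on a line such as "Module: rcmd/ssh".
list_rcmd_modules() {
    sed -n 's|^Module:[[:space:]]*rcmd/||p'
}

# Succeed if the named rcmd module (for example "mrsh") is in the listing on stdin.
has_rcmd_module() {
    list_rcmd_modules | grep -qx "$1"
}

# Only query the real tool when it is installed.
if command -v pdsh >/dev/null 2>&1; then
    pdsh -L 2>/dev/null | list_rcmd_modules
fi
```

A call such as "pdsh -L | has_rcmd_module mrsh" could then gate later steps that depend on a particular module.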

SSH module example

PDSH on SLE uses the SSH remote command module by default, so it serves as the first configuration example. This module requires a working, non-interactive public key authentication configuration to be in place for the participating systems.

Implementing a public key authentication configuration is beyond the scope of this document.

Recommendations

When using SSH, consider implementing a ~/.ssh/config file similar to the following example to suppress errors and some programmatic feedback for the user issuing commands. Errors or program feedback often interfere with PDSH operations or simply clutter output.

Host <HOSTNAME_1>

UserKnownHostsFile /dev/null

StrictHostKeyChecking no

LogLevel error

or

Host <HOSTNAME_1> <HOSTNAME_2>

UserKnownHostsFile /dev/null

StrictHostKeyChecking no

LogLevel error

or these defaults can be specified using environment settings at runtime:

~# PDSH_SSH_ARGS_APPEND="-o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null -o LogLevel=error" pdsh <COMMAND_LINE>

or by setting persistent user environment defaults:

~/.bashrc

export PDSH_SSH_ARGS_APPEND="-o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null -o LogLevel=error"

Consider the balance of usability and security when implementing such a configuration and design for it. The systems management realm and network space should be designed to minimize risk but provide optimal system ingress for the utility, for example by using a private network shared between administrative nodes and compute nodes.

Deployment

Once the pdsh package is deployed, assuming the public key authentication configurations are in place, you can begin using the utility.

On administration system(s):

- Install the pdsh package.

Systems can be targeted for administrative actions by specifying them individually as arguments or by building lists of systems in “machine” files to target them as groups. A machine file simply has the name of each managed system on a new line in a uniquely named file. Systems can be listed in multiple files.

/etc/pdsh/nodes_even:

node2.dvc.darkvixen.com

node4.dvc.darkvixen.com

node6.dvc.darkvixen.com

node8.dvc.darkvixen.com

/etc/pdsh/nodes_odd:

node1.dvc.darkvixen.com

node3.dvc.darkvixen.com

node5.dvc.darkvixen.com

node7.dvc.darkvixen.com
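Machine files like the two shown above could be generated rather than typed by hand. The following is a minimal sketch, assuming nodes are named node<N> under a single domain; the function name and the scratch output directory are illustrative choices, and on a real administration system the directory would be /etc/pdsh.

```shell
#!/bin/sh
# Write node<N>.<domain> for every N in first..last into an even or odd machine file.
# The output directory is a parameter so the sketch can be tried outside /etc/pdsh.
write_machine_files() {
    dir=$1 first=$2 last=$3 domain=$4
    mkdir -p "$dir" || return 1
    : > "$dir/nodes_even"
    : > "$dir/nodes_odd"
    n=$first
    while [ "$n" -le "$last" ]; do
        if [ $((n % 2)) -eq 0 ]; then
            echo "node$n.$domain" >> "$dir/nodes_even"
        else
            echo "node$n.$domain" >> "$dir/nodes_odd"
        fi
        n=$((n + 1))
    done
}

# Example: build the two files in a scratch directory and show one of them.
outdir=$(mktemp -d)
write_machine_files "$outdir" 1 8 dvc.darkvixen.com
cat "$outdir/nodes_even"
```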

Taking the new configuration for a walk

Since the default remote command module is SSH, the command to target systems is fairly straightforward. The “-w” argument accepts hosts, files, and even regular expressions.
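The target forms accepted by “-w” can be sketched in text as follows. The "run" helper and the DRY_RUN variable are hypothetical conveniences that print each command rather than execute it; the host names follow the examples in this article, and "uptime" is only a placeholder command.

```shell
#!/bin/sh
# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Explicit, comma-separated host list.
run pdsh -w node1,node2 uptime
# Bracketed host range.
run pdsh -w 'node[1-4]' uptime
# Hosts read from a machine file (leading "^").
run pdsh -w ^/etc/pdsh/nodes_even uptime
# Host range narrowed by a regular expression filter (leading "/").
run pdsh -w 'node[1-8],/2$/' uptime
# Host range with one host excluded (leading "-").
run pdsh -w 'node[1-4],-node3' uptime
```

Setting DRY_RUN=0 would execute the commands for real, which assumes the SSH configuration described earlier is already in place.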

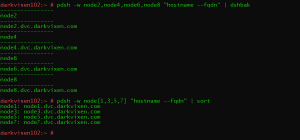

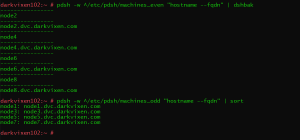

Figure 1: Syntax for using PDSH over SSH

Figure 2: Syntax for using PDSH over SSH

Note the differences in the command output when pdsh is piped to “dshbak” or “sort”.
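For readers without access to the screenshots, the effect of piping to “sort” can be approximated with simulated output. PDSH prefixes each line with the host name, and lines arrive in whatever order the hosts respond. The kernel version strings below are made up for illustration.

```shell
#!/bin/sh
# Stand-in for `pdsh -w node[1-4] uname -r`: lines arrive in completion order.
# The kernel version strings are made up for illustration.
simulated_pdsh_output() {
    printf '%s\n' \
        'node3: 5.14.21-150500.55-default' \
        'node1: 5.14.21-150500.55-default' \
        'node4: 5.14.21-150500.55-default' \
        'node2: 5.14.21-150500.55-default'
}

# Sorting on the "host:" prefix restores a predictable order, one line per host.
simulated_pdsh_output | sort

# With the real tools installed, the equivalents would be:
#   pdsh -w 'node[1-4]' uname -r | sort
#   pdsh -w 'node[1-4]' uname -r | dshbak -c
```

Where “sort” keeps one line per host, “dshbak” regroups the output under per-host headers, and its "-c" option additionally coalesces hosts that returned identical output.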

EXEC module example

The deployment requirements and security recommendations for the EXEC module are the same as those of the SSH remote command module for this use case. It is demonstrated here solely to display a syntax example.

The same commands can be issued through SSH using the “EXEC” remote command module:

~# pdsh -R exec -w node[1-4] ssh -x -l %u %h hostname --fqdn

MRSH module example

PDSH can also use munge authentication to communicate with other systems to delegate work. The MRSH (Munge authenticated remote shell) protocol uses munge to encode the credentials of a local process, which are then transmitted to a remote process. The remote process uses munge to decode, or unmunge, those credentials and subsequently uses them to authorise the requested action. Similar to the SSH module, this module requires a working, non-interactive munge key authentication configuration to be in place for the participating systems.

Implementing a munge key authentication configuration is beyond the scope of this document.

There are benefits to using the MRSH remote command module over the SSH module. For example, because standard error output (stderr) is incorporated into SSH responses, configurations that mitigate this are required. Additionally, SSH requires reserved sockets, so in clusters with very large node counts port exhaustion could be encountered when massively parallel work occurs. The MRSH module is not affected by either factor.

SLURM clusters rely on munge authentication so systems participating in these clusters are already prepared to use this module.

Recommendations

This example requires a closer look at the security model for the participating systems previously referenced. So far both examples presented utilised the SSH protocol for communication and authentication for the user issuing commands. In this case that user is root, but it could be another user with elevated privileges as well. The assumption is that access to the SSH service is hardened on these systems according to well documented, and organisational, best practice conventions.

The systems in these examples use a basic security model typical of many HPC deployments. There is a perimeter firewall between the cluster head node(s) and the organisational and public networks, there is a local software firewall running on the head node, and no local firewalls running on the compute nodes. However, the head node(s) and compute nodes share a non-routable private network for inter-node communication. Typically this network can be, and usually already is, leveraged for munge communication. Extending it to the MRSH protocol as well seems a good fit.

As mentioned, SSH uses well-known reserved or customisable ports. MRSH, by contrast, relies on credential-based authentication and forgoes the use of reserved ports. This means the ports being used cannot be predicted, which makes port-based rules difficult to implement on most firewalls. Fortunately, the firewalld implementation present on most modern Linux systems has features that can be used to implement such a configuration without throwing reasonable security conventions to the wind.

The cluster head node in this example is used as an administration system for the cluster so this configuration is deployed on it. Systems providing administrative ingress in other environments may differ but the implementation procedure would be similar.

Implement a firewalld “ipset” for the cluster inter-node network that can be referenced in configurations.

Note that “firewall-cmd” long options are preceded by a double dash (--). Some publishing platforms, WordPress among them, convert the double dash to an en dash character, so check the syntax before pasting any of these commands into a shell.

~# firewall-cmd --new-ipset=clusterips --type=hash:net --permanent

~# firewall-cmd --reload

~# firewall-cmd --ipset=clusterips --set-description="private cluster LAN" --permanent

~# firewall-cmd --ipset=clusterips --add-entry=192.168.0.0/24 --permanent

In firewalld, source bindings take precedence over interface bindings when a zone is selected for incoming traffic. Adding the new “clusterips” ipset as a source for the firewalld “trusted” zone will therefore effectively whitelist those network addresses regardless of the interface the packets are received on.

~# firewall-cmd --zone=trusted --add-source=ipset:clusterips --permanent

~# firewall-cmd --reload

Useful firewalld features such as this will be covered in more detail in an upcoming blog.

Query firewalld for the configuration details.

~# firewall-cmd --get-ipsets

~# firewall-cmd --info-ipset=clusterips

~# firewall-cmd --ipset=clusterips --get-description --permanent

~# firewall-cmd --zone=trusted --list-all

or

~# firewall-cmd --zone=trusted --list-sources

The “trusted” zone should be “active” and have the “ipset:clusterips” listed as a source.
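The final check could also be scripted. The sketch below assumes the sources are printed as a whitespace-separated list, which is how "firewall-cmd --zone=trusted --list-sources" is expected to report them; the function name is a hypothetical helper.

```shell
#!/bin/sh
# Succeed if "ipset:<name>" appears in a whitespace-separated source list on stdin,
# which is the assumed shape of `firewall-cmd --zone=trusted --list-sources` output.
zone_has_ipset_source() {
    tr -s ' \t' '\n\n' | grep -qx "ipset:$1"
}

# Only query firewalld when the client is installed.
if command -v firewall-cmd >/dev/null 2>&1; then
    if firewall-cmd --zone=trusted --list-sources 2>/dev/null | zone_has_ipset_source clusterips; then
        echo "trusted zone includes ipset:clusterips"
    else
        echo "ipset:clusterips is not a source of the trusted zone" >&2
    fi
fi
```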

The pdsh default remote execution module used can be overridden using command line arguments or environment settings at runtime:

~# pdsh -R mrsh <COMMAND_LINE>

~# PDSH_RCMD_TYPE=mrsh pdsh <COMMAND_LINE>

or by setting persistent user environment defaults:

~/.bashrc

export PDSH_RCMD_TYPE=mrsh

Software packages

Although the MRSH remote command module (/usr/lib64/pdsh/mcmd.so) and munge library (libmunge.so) are installed with the pdsh package, the daemons that listen for MRSH connections are not.

Installation of the additional packages will provide the required socket listeners.

mrsh: Remote shell program that uses munge authentication

mrsh-server: Servers for remote access commands (mrsh, mrlogin, mrcp)

/usr/lib/systemd/system/mrlogind.socket

/usr/lib/systemd/system/mrshd.socket

~# zypper install mrsh mrsh-server

Deployment

Once the required packages are installed, assuming the munge key authentication configurations are in place, you can complete the PDSH configuration.

On administration system(s):

- Install the pdsh, mrsh, and mrsh-server packages.

- Enable and start the mrlogind and mrshd socket listeners.

~# systemctl enable mrlogind.socket && systemctl start mrlogind.socket

~# systemctl enable mrshd.socket && systemctl start mrshd.socket

On managed systems:

- Install the mrsh and mrsh-server packages.

- Enable and start the mrlogind and mrshd socket listeners.

~# systemctl enable mrlogind.socket && systemctl start mrlogind.socket

~# systemctl enable mrshd.socket && systemctl start mrshd.socket

- Add the mrsh and mrlogin services to /etc/securetty if PDSH commands will be issued by user root:

tty1

tty2

tty3

tty4

tty5

tty6

mrsh

mrlogin

Again consider the balance of usability and security when adding services to this list.
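The /etc/securetty change could be applied idempotently, so that repeated runs do not add duplicate entries. This is a sketch only: the "ensure_lines" function is a hypothetical helper, and it is demonstrated here on a scratch file rather than the real /etc/securetty.

```shell
#!/bin/sh
# Append each given entry to the file unless an identical line is already present.
ensure_lines() {
    file=$1
    shift
    for entry in "$@"; do
        grep -qxF "$entry" "$file" 2>/dev/null || echo "$entry" >> "$file"
    done
}

# Demonstrated on a scratch copy; on a managed system the target would be /etc/securetty.
scratch=$(mktemp)
printf '%s\n' tty1 tty2 tty3 tty4 tty5 tty6 > "$scratch"
ensure_lines "$scratch" mrsh mrlogin
ensure_lines "$scratch" mrsh mrlogin   # a second run changes nothing
cat "$scratch"
```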

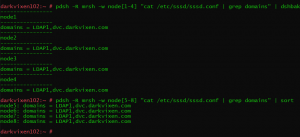

Taking the new configuration for a walk

Using the MRSH protocol to delegate the same work requires only a syntax change.

Figure 3: Syntax for using PDSH over MRSH

SLURM module example

PDSH can interact with compute nodes in SLURM clusters if the appropriate remote command module is installed and a munge key authentication configuration is in place. Managed systems can be grouped by SLURM partition or job assignment criteria.

SLURM clusters rely on munge key authentication so systems participating in these clusters are already prepared to use this module.

Implementing a munge key authentication configuration is beyond the scope of this document.

Software packages

PDSH is available on most Linux distributions but not always with SLURM support built in. Often administrators will have to build the installation rpm to include SLURM support. All that is required on SLE systems is to install the PDSH SLURM plugin to implement SLURM support.

Installation of the additional package will provide the PDSH SLURM plugin.

pdsh-slurm: Plugin for pdsh to determine nodes to run on by SLURM jobs or partitions.

/usr/lib64/pdsh/slurm.so

~# zypper install pdsh-slurm

Deployment

The configuration and deployment procedures in the previous MRSH module example are also requirements of the PDSH SLURM module. If these tasks have been completed simply installing the “pdsh-slurm” package is all that is required to use the SLURM aware pdsh application on SLE systems.

On administration system(s):

- Install the pdsh and pdsh-slurm packages.

- Install the mrsh and mrsh-server packages and ensure the configuration for the previously detailed MRSH protocol example is in place.

- Verify the SLURM module has been installed.

~# pdsh -L

On managed systems:

- Install the mrsh and mrsh-server packages and ensure the configuration for the previously detailed MRSH protocol example is in place.

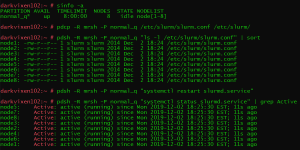

Taking the new configuration for a walk

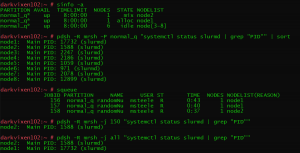

Using PDSH against a SLURM cluster.

The installation of the SLURM module adds the following pdsh command line arguments:

-j jobid: Run on nodes allocated to SLURM job(s) (“all” = all jobs)

-P partition: Run on nodes contained in SLURM partition

Figure 4: Syntax for using PDSH SLURM plugin

Note the ability to target compute nodes running specific jobs, or even all jobs. This feature could be useful where all jobs had to be suspended prior to a maintenance event.
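In text form, the SLURM-aware targeting might look like the following sketch. The partition name "normal" and job ID 1234 are hypothetical placeholders, "uptime" stands in for any command, and the "run" helper prints the commands rather than executing them unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical values; real ones would come from sinfo and squeue.
PARTITION=${PARTITION:-normal}
JOBID=${JOBID:-1234}

# Every node in one SLURM partition.
run pdsh -P "$PARTITION" uptime
# Only the nodes allocated to one job.
run pdsh -j "$JOBID" uptime
# The nodes allocated to any running job.
run pdsh -j all uptime
```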

The pdsh package contains a utility named “pdcp”, a parallel file copy tool, that also targets nodes using SLURM partition and job criteria. Consider a daemon config file update example.

Figure 5: Syntax for using PDSH SLURM plugin

Other examples might include the ability to easily modify the runtime environment or logging options for compute nodes running a particular job that is experiencing performance issues or crashing.

The complement to the pdcp utility is the reverse-pdcp utility, or “rpdcp”. When the rpdcp command is used, files are retrieved from remote hosts and stored locally. All directories or files retrieved will be stored with their remote hostname appended to the filename.

The /usr/bin/pdcp and /usr/bin/rpdcp utilities require the installation of the pdsh package on managed systems.
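A push and a pull with these two utilities might be sketched as follows. The file paths, partition name, and collection directory are hypothetical examples, and the "run" helper only prints the commands unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical values used only for illustration.
PARTITION=${PARTITION:-normal}
CONFIG=${CONFIG:-/etc/example.conf}
COLLECT_DIR=${COLLECT_DIR:-/tmp/collected}

# Push an updated config file to every node in a SLURM partition.
run pdcp -P "$PARTITION" "$CONFIG" "$CONFIG"
# Pull the same file back from a node range into a local directory;
# each retrieved copy is stored with its remote hostname appended to the filename.
run rpdcp -w 'node[1-4]' "$CONFIG" "$COLLECT_DIR"
```

Note that the last argument to rpdcp is a local directory, which would need to exist before a real run.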

Summary

The applications provided by the pdsh package(s) arguably have counterparts that perform similar parallel tasks desirable to administrators. The administrator’s or even the organisation’s choice to use any of them may simply come down to feature sets, preference, or deployment pain. However, most of these tools co-exist happily with others, so there is no need to exclude any of them if particular features are desired. The PDSH stack on SLE is extensible as well. Additional features and remote command modules can be added by installing additional pdsh packages. Regardless of parallel command allegiances, cluster administrator or not, the PDSH stack should be in any Linux administrator’s tool kit.
