Simple Mount Structure for SAP Application Platform

Today I want to blog about a feature named Simple Mount Structure for SAP Application Platform. We would like to add this feature to our product in the near future. It greatly simplifies the SAP HA cluster.

Customers told us that the classical filesystem structure for a cluster controlling the central services of the SAP application platform tends to be complex and error-prone. As SUSE is keen to improve the customer experience, we decided to simplify the cluster setup and the filesystem structure. The result is the simple mount structure for the SAP application platform. Instead of a number of filesystems per SAP system plus a number of filesystems per SAP instance, the setup needs only a minimum. These filesystems no longer need to be switched over or controlled by the pacemaker cluster. Typically systemd mounts them during system startup at boot time. The cluster controls the start and stop of the SAP start framework of each SAP instance, and it controls and monitors all integrated SAP instances as well.

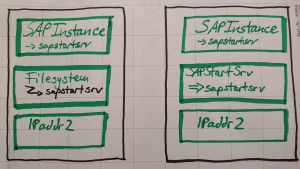

Instead of the filesystem resources needed for each SAP instance, we have added a new resource type SAPStartSrv that controls the matching sapstartsrv framework process. The cluster configuration is really straightforward.

The architectural draft shows the old architecture with the filesystem stack (which could be multiple resources) on the left. The right side shows the new simple mount concept. The sketch also shows the difference in how sapstartsrv is stopped by the cluster. While the former architecture stopped the SAP process by forcefully unmounting the filesystem, the new stack gracefully stops the SAP start framework. The way of monitoring for a stopped SAP instance resource (also named probe) has been adapted to be independent of a running sapstartsrv process.

Now it's time to look into the details of the drafted architecture. Let's start with a cluster configuration for the SAPStartSrv resource and the resource group for the ASCS SAP instance as an example. Later we will show the look and feel of the new stack from the point of view of the system administrator tools.

Example Configuration of an ASCS SAP instance including a network load-balancer

Example configuration for an SAP ASCS instance resource group in an ENSA2 setup. The SAP system name is EVA, the SAP service is ASCS, the SAP instance number is 00, and the SAP virtual host name is sapevaas. A load balancer is used together with a dedicated IP netmask configuration for specific public cloud environments. The SAPInstance resource definition includes a fencing priority. Set the cluster property priority-fencing-delay to enable this feature. The ERS instance group and the location constraint are part of the complete solution. One of our next blogs about towards-zero-downtime will explain that in detail.

    primitive rsc_SAPStartSrv_EVA_ASCS00 ocf:suse:SAPStartSrv \
        params InstanceName=EVA_ASCS00_sapevaas
    primitive rsc_SAPInstance_EVA_ASCS00 SAPInstance \
        op monitor interval=11 timeout=60 on-fail=restart \
        params InstanceName=EVA_ASCS00_sapevaas \
        START_PROFILE=/sapmnt/EVA/profile/EVA_ASCS00_sapevaas \
        AUTOMATIC_RECOVER=false MINIMAL_PROBE=true \
        meta resource-stickiness=5000 priority=100
    primitive rsc_IPAddr2_EVA_ASCS00 IPaddr2 \
        params ip=192.168.178.188 cidr_netmask=32
    primitive rsc_Loadbalancer_EVA_ASCS00 azure-lb \
        params port=85085
    group grp_EVA_ASCS00 \
        rsc_Loadbalancer_EVA_ASCS00 rsc_IPAddr2_EVA_ASCS00 \
        rsc_SAPStartSrv_EVA_ASCS00 rsc_SAPInstance_EVA_ASCS00 \
        meta resource-stickiness=3000
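Pacemaker starts the members of a resource group in the order in which they are listed and stops them in reverse order. The following Python sketch only models that rule for the group from the example. The resource names are copied from the configuration, while the helper functions and the prefix-based lookup are assumptions made for illustration and are not part of any cluster tool.

```python
# Resource names are taken from the example configuration; the helpers are
# hypothetical and only model the ordering semantics of a Pacemaker group.
GROUP_MEMBERS = [
    "rsc_Loadbalancer_EVA_ASCS00",
    "rsc_IPAddr2_EVA_ASCS00",
    "rsc_SAPStartSrv_EVA_ASCS00",
    "rsc_SAPInstance_EVA_ASCS00",
]


def start_order(members):
    """Group members start in the order in which they are listed."""
    return list(members)


def stop_order(members):
    """Group members stop in the reverse of the listed order."""
    return list(reversed(members))


def ordering_problems(members):
    """Return violations of the order IP -> SAPStartSrv -> SAPInstance."""
    def position(prefix):
        for index, name in enumerate(members):
            if name.startswith(prefix):
                return index
        return None

    ip = position("rsc_IPAddr2_")
    srv = position("rsc_SAPStartSrv_")
    inst = position("rsc_SAPInstance_")
    if None in (ip, srv, inst):
        return ["group must contain IP, SAPStartSrv and SAPInstance"]
    problems = []
    if not ip < srv:
        problems.append("IP address must be listed before SAPStartSrv")
    if not srv < inst:
        problems.append("SAPStartSrv must be listed before SAPInstance")
    return problems


if __name__ == "__main__":
    print("start:", " -> ".join(start_order(GROUP_MEMBERS)))
    print("stop: ", " -> ".join(stop_order(GROUP_MEMBERS)))
    print("problems:", ordering_problems(GROUP_MEMBERS) or "none")
```

A check like this could run against a parsed group definition before the configuration is loaded into a test cluster. It does not replace testing in the cluster itself.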

Description of SAPStartSrv

SAPStartSrv is a resource agent for managing the sapstartsrv process for a single SAP instance as an HA resource.

One SAP instance is defined by having exactly one instance profile. The instance profiles can usually be found in the directory /sapmnt/$SID/profile/. In an Enqueue Replication setup, one (ABAP) Central Services instance ((A)SCS) and at least one Replicated Enqueue instance (ERS) are configured. A Linux HA cluster can control these instances by using the SAPInstance resource agent. That resource agent depends on the SAP sapstartsrv service for starting, stopping, monitoring and probing the instances. The sapstartsrv needs to read the respective instance profile. By default, one system-wide sapstartsrv starts at Linux boot time to provide the needed service for all SAP instances.

For specific Enqueue Replication setups, using instance-specific sapstartsrv processes might be desirable. The cluster manages each SAP instance on its own as a pair of one SAPStartSrv and one SAPInstance resource. SAPStartSrv starts sapstartsrv for one specific SAP instance. By intention it does not monitor the service. SAPInstance then uses that service for managing the SAP instance. SAPInstance also does in-line recovery of failed sapstartsrv processes. The instance's virtual service IP address is used for contacting the sapstartsrv process. Thus the IP address resource (e.g. IPaddr2, aws-vpc-route53) needs to start before SAPStartSrv.
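To make the relation between instance name and instance profile concrete, here is a small Python sketch. It assumes the naming pattern seen in the example, `<SID>_<service><number>_<virtual host>`, and the usual profile directory /sapmnt/$SID/profile/. The function names and the regular expression are illustrative assumptions, not part of any SAP or SUSE tool.

```python
import re
from typing import NamedTuple


class SapInstance(NamedTuple):
    sid: str           # SAP system name, e.g. EVA
    name: str          # instance name, e.g. ASCS00
    number: str        # instance number, e.g. 00
    virtual_host: str  # virtual host name, e.g. sapevaas


# Assumed pattern: three-character SID, service name, two-digit number, host.
_INSTANCE_NAME = re.compile(r"^([A-Z][A-Z0-9]{2})_([A-Z]+)(\d{2})_(\S+)$")


def parse_instance_name(instance_name: str) -> SapInstance:
    """Split a value like EVA_ASCS00_sapevaas into its components."""
    match = _INSTANCE_NAME.match(instance_name)
    if match is None:
        raise ValueError(f"unexpected instance name: {instance_name!r}")
    sid, service, number, host = match.groups()
    return SapInstance(sid, service + number, number, host)


def profile_path(instance_name: str, sapmnt: str = "/sapmnt") -> str:
    """Build the usual location of the instance profile."""
    instance = parse_instance_name(instance_name)
    return f"{sapmnt}/{instance.sid}/profile/{instance_name}"


if __name__ == "__main__":
    print(parse_instance_name("EVA_ASCS00_sapevaas"))
    print(profile_path("EVA_ERS10_sapevaer"))
```

Each instance maps to exactly one profile file, which is the file its sapstartsrv has to read.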

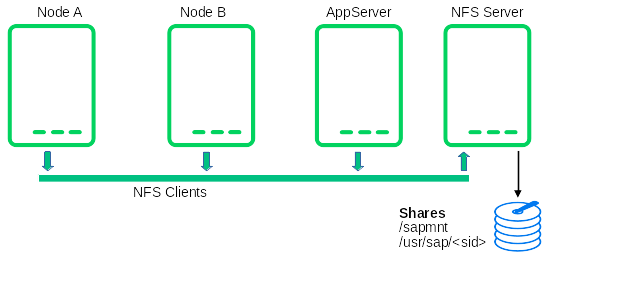

The described setup is particularly beneficial for public cloud and NFS-based environments. Both NFS shares with instance directories can be mounted statically on all nodes. The HA cluster does not need to control those filesystems. The /usr/sap/sapservices file resides locally on each cluster node.

What are sapping and sappong doing?

The new systemd services sapping and sappong guarantee that the sapinit boot script does not already start the sapstartsrv processes during system boot. Together with our lighthouse customer we developed a boot sequence that hides and unhides the sapservices file to avoid unintended starts of those processes. This also keeps the log files in the work directory of each SAP instance clean and valuable for support.

Service sapping runs just before sapinit. It hides the sapservices file, so the SAP boot script starts only the SAP host agent but no SAP start framework. After the sapinit service has finished, sappong unhides the sapservices file again. This guarantees that the file is completely available again after system boot. You also do not need to comment out the cluster-controlled SAP instances. This is less error-prone, completely automated, and still allows customers to work with SAP control tools like the SAP management console, the command line program sapcontrol or any other SAP start framework client program. The integration is still done via sap-suse-cluster-connector version 3.

Sapping and sappong are both services that run only for a short time during system boot. After they have done their work, they are inactive during system operation.
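The hide and unhide sequence can be illustrated with a few lines of Python. This is only a sketch of the idea, run in a temporary directory. It assumes that hiding means renaming the file, and the suffix is made up. The real work is done by the sapservices-move script, which may work differently.

```python
import tempfile
from pathlib import Path

# Hypothetical suffix; the real sapservices-move script may hide the file
# in a different way.
HIDDEN_SUFFIX = ".hidden"


def hide(sapservices: Path) -> None:
    """Conceptually what sapping does: make the file invisible to sapinit."""
    if sapservices.exists():
        sapservices.rename(sapservices.with_name(sapservices.name + HIDDEN_SUFFIX))


def unhide(sapservices: Path) -> None:
    """Conceptually what sappong does: restore the file after sapinit."""
    hidden = sapservices.with_name(sapservices.name + HIDDEN_SUFFIX)
    if hidden.exists() and not sapservices.exists():
        hidden.rename(sapservices)


def simulate_boot(sapservices: Path) -> bool:
    """Run hide -> sapinit -> unhide; return whether sapinit saw the file."""
    hide(sapservices)
    try:
        seen_by_sapinit = sapservices.exists()  # stand-in for the sapinit script
    finally:
        unhide(sapservices)
    return seen_by_sapinit


def demo() -> tuple:
    """Return (file seen by sapinit, file present after boot)."""
    with tempfile.TemporaryDirectory() as tmp:
        sapservices = Path(tmp) / "sapservices"
        sapservices.write_text("# placeholder content\n")
        seen = simulate_boot(sapservices)
        return seen, sapservices.exists()


if __name__ == "__main__":
    print(demo())
```

The point of the sequence is that sapinit finds no sapservices entries to start, while the file is back in place for all SAP tools once the boot has finished.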

How does it look and feel – systemd?

Two one-shot services are added around sapinit. The sapping service runs just before sapinit, sappong just after it. The status “inactive (dead)” is normal for this kind of one-shot service. Both sapping and sappong call the same script, sapservices-move.

    * sapping.service - Hiding sapservices file from sapinit
       Loaded: loaded (/usr/lib/systemd/system/sapping.service; enabled; vendor preset: disabled)
       Active: inactive (dead) since Mon 2021-01-25 09:42:03 CET; 7h ago
         Docs: man:sapservices-move(8)

    * sapinit.service - LSB: Start the sapstartsrv
       Loaded: loaded (/etc/init.d/sapinit; generated; vendor preset: disabled)
       Active: active (running) since Thu 2021-01-21 10:21:44 CET; 4 days ago
         Docs: man:systemd-sysv-generator(8)
        Tasks: 13
       CGroup: /system.slice/sapinit.service
               ├─2563 /usr/sap/hostctrl/exe/saphostexec pf=/usr/sap/hostctrl/exe/host_profile
               ├─2691 /usr/sap/hostctrl/exe/sapstartsrv pf=/usr/sap/hostctrl/exe/host_profile -D
               └─2769 /usr/sap/hostctrl/exe/saposcol -l -w60 pf=/usr/sap/hostctrl/exe/host_profile

    * sappong.service - Unhiding sapservices file from sapinit
       Loaded: loaded (/usr/lib/systemd/system/sappong.service; enabled; vendor preset: disabled)
       Active: inactive (dead) since Thu 2021-01-21 10:21:44 CET; 4 days ago
         Docs: man:sapservices-move(8)

How does it look and feel – filesystem?

All filesystems are mounted by the OS on all nodes; no filesystem is controlled by the HA cluster. A simple way is to add the filesystems to /etc/fstab. Another option might be the auto-mounter facility. At least /sapmnt/<SID>/ and /usr/sap/<SID>/ are part of a typical installation. The example focuses on these entries. Usually a transport directory like /usr/sap/trans/ is mounted as well in production environments. It has been left out here for simplicity. Mount options depend on the customer environment.

    #/etc/fstab
    ...
    192.168.1.1:/export/EVA/sapmntEVA /sapmnt/EVA nfs vers=3.0,defaults 0 0
    192.168.1.1:/export/EVA/usrsapEVA /usr/sap/EVA nfs vers=3.0,defaults 0 0
    #
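Because the cluster no longer mounts anything, it can be useful to verify that every node has the two entries in place. The following Python sketch parses fstab-style text and reports missing mount points. The function names are hypothetical, and the sample text simply repeats the example entries.

```python
SAMPLE_FSTAB = """\
#/etc/fstab
...
192.168.1.1:/export/EVA/sapmntEVA /sapmnt/EVA nfs vers=3.0,defaults 0 0
192.168.1.1:/export/EVA/usrsapEVA /usr/sap/EVA nfs vers=3.0,defaults 0 0
#
"""


def parse_fstab(text):
    """Return one dict per fstab entry, skipping comments and short lines."""
    entries = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split()
        if len(fields) < 4:  # skips the "..." placeholder and malformed lines
            continue
        entries.append({
            "device": fields[0],
            "mountpoint": fields[1],
            "fstype": fields[2],
            "options": fields[3].split(","),
        })
    return entries


def missing_mounts(text, sid):
    """List the simple mount filesystems of <sid> that have no fstab entry."""
    required = [f"/sapmnt/{sid}", f"/usr/sap/{sid}"]
    present = {entry["mountpoint"].rstrip("/") for entry in parse_fstab(text)}
    return [mountpoint for mountpoint in required if mountpoint not in present]


if __name__ == "__main__":
    print(missing_mounts(SAMPLE_FSTAB, "EVA"))
```

On a real node the text would come from reading /etc/fstab. Setups that use the auto-mounter instead would need a different check.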

How does it look and feel – process list?

The sapstartsrv processes are separated into independent groups. One process group is still created by sapinit at boot. It contains the saphostagent processes only and runs on all cluster nodes. Two more groups are created by the SAPStartSrv HA resource agent: one for the ASCS instance, another one for the ERS instance. Those instance-specific groups are started only on the node where the respective SAP instance is started by the cluster.

- saphostagent processes, started at boot by sapinit service

    root 2563 ... Jan21 0:07 /usr/sap/hostctrl/exe/saphostexec pf=/usr/sap/hostctrl/exe/host_profile
    sapadm 2657 ... Jan21 0:00 /usr/lib/systemd/systemd --user
    sapadm 2691 ... Jan21 0:38 /usr/sap/hostctrl/exe/sapstartsrv pf=/usr/sap/hostctrl/exe/host_profile -D
    root 2769 ... Jan21 2:28 /usr/sap/hostctrl/exe/saposcol -l -w60 pf=/usr/sap/hostctrl/exe/host_profile

- instance ASCS00 specific sapstartsrv processes, started by SAPStartSrv

    evaadm 10887 ... Jan25 0:20 /usr/sap/EVA/ASCS00/exe/sapstartsrv pf=/sapmnt/EVA/profile/EVA_ASCS00_sapevaas -D -u evaadm
    evaadm 11127 ... Jan25 0:00 sapstart pf=/sapmnt/EVA/profile/EVA_ASCS00_sapevaas
    evaadm 11138 ... Jan25 0:02 ms.sapEVA_ASCS00 pf=/usr/sap/EVA/SYS/profile/EVA_ASCS00_sapevaas
    evaadm 11139 ... Jan25 0:29 enq.sapEVA_ASCS00 pf=/usr/sap/EVA/SYS/profile/EVA_ASCS00_sapevaas

- instance ERS10 specific sapstartsrv processes, started by SAPStartSrv

    evaadm 13947 ... Jan25 0:27 /usr/sap/EVA/ERS10/exe/sapstartsrv pf=/sapmnt/EVA/profile/EVA_ERS10_sapevaer -D -u evaadm
    evaadm 14207 ... Jan25 0:00 sapstart pf=/sapmnt/EVA/profile/EVA_ERS10_sapevaer
    evaadm 14216 ... Jan25 0:42 enqr.sapEVA_ERS10 pf=/usr/sap/EVA/SYS/profile/EVA_ERS10_sapevaer NR=$(SCSID)
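The three groups can be told apart by the profile that each sapstartsrv process was started with. The Python sketch below classifies command lines like the ones in the listings above. The function name and the label "saphostagent" are chosen for illustration, and the command lines are shortened copies of the examples.

```python
import os

# Profile file name used by the host agent's sapstartsrv in the listing above.
HOST_AGENT_PROFILE = "host_profile"

COMMANDS = [
    "/usr/sap/hostctrl/exe/saphostexec pf=/usr/sap/hostctrl/exe/host_profile",
    "/usr/sap/hostctrl/exe/sapstartsrv pf=/usr/sap/hostctrl/exe/host_profile -D",
    "/usr/sap/EVA/ASCS00/exe/sapstartsrv pf=/sapmnt/EVA/profile/EVA_ASCS00_sapevaas -D -u evaadm",
    "/usr/sap/EVA/ERS10/exe/sapstartsrv pf=/sapmnt/EVA/profile/EVA_ERS10_sapevaer -D -u evaadm",
    "sapstart pf=/sapmnt/EVA/profile/EVA_ASCS00_sapevaas",
]


def sapstartsrv_group(cmdline):
    """Return the group a sapstartsrv command line belongs to, else None."""
    args = cmdline.split()
    if not args or os.path.basename(args[0]) != "sapstartsrv":
        return None
    profile = next((arg[len("pf="):] for arg in args[1:] if arg.startswith("pf=")), None)
    if profile is None:
        return None
    name = os.path.basename(profile)
    return "saphostagent" if name == HOST_AGENT_PROFILE else name


def sapstartsrv_groups(cmdlines):
    """Classify all command lines and drop everything that is not sapstartsrv."""
    groups = (sapstartsrv_group(cmdline) for cmdline in cmdlines)
    return [group for group in groups if group is not None]


if __name__ == "__main__":
    for group in sapstartsrv_groups(COMMANDS):
        print(group)
```

On a node that currently runs neither ASCS nor ERS, only the host agent entry would be expected.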

How does it look and feel – sapcontrol?

The sap-suse-cluster-connector HA interface is not affected by SAPStartSrv. Everything works as usual.

In fact we expect SAPStartSrv to be used in future HA certifications.

    25.01.2021 17:50:30
    GetSystemInstanceList
    OK
    hostname, instanceNr, httpPort, httpsPort, startPriority, features, dispstatus
    ensanfs1, 0, 50013, 50014, 1, MESSAGESERVER|ENQUE, GREEN
    ensanfs2, 10, 51013, 51014, 0.5, ENQREP, GREEN

    25.01.2021 17:51:01
    GetProcessList
    OK
    name, description, dispstatus, textstatus, starttime, elapsedtime, pid
    msg_server, MessageServer, GREEN, Running, 2021 01 25 09:42:27, 8:08:34, 11138

    25.01.2021 17:53:30
    HACheckFailoverConfig
    OK
    state, category, description, comment
    SUCCESS, SAP CONFIGURATION, SAPInstance RA sufficient version, SAPInstance includes isers patch
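Output like this is easy to evaluate in a script. The Python sketch below parses one sapcontrol answer and checks that all instances are GREEN. It assumes the layout seen in the example (timestamp, function name, status, a header line, then comma-separated rows). The function names are hypothetical.

```python
SAMPLE_OUTPUT = """\
25.01.2021 17:50:30
GetSystemInstanceList
OK
hostname, instanceNr, httpPort, httpsPort, startPriority, features, dispstatus
ensanfs1, 0, 50013, 50014, 1, MESSAGESERVER|ENQUE, GREEN
ensanfs2, 10, 51013, 51014, 0.5, ENQREP, GREEN
"""


def parse_sapcontrol(output):
    """Return (function, status, rows) for one sapcontrol answer."""
    lines = [line.strip() for line in output.splitlines() if line.strip()]
    if len(lines) < 4:
        raise ValueError("unexpected sapcontrol output")
    function, status = lines[1], lines[2]
    header = [column.strip() for column in lines[3].split(",")]
    rows = [
        dict(zip(header, (cell.strip() for cell in line.split(","))))
        for line in lines[4:]
    ]
    return function, status, rows


def all_green(rows):
    """True if there is at least one row and every dispstatus is GREEN."""
    return bool(rows) and all(row.get("dispstatus") == "GREEN" for row in rows)


if __name__ == "__main__":
    function, status, rows = parse_sapcontrol(SAMPLE_OUTPUT)
    print(function, status, all_green(rows))
```

The same parser also handles the GetProcessList and HACheckFailoverConfig answers from the example, since they follow the same layout.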

How does it look and feel – crm_mon?

The overall concept is similar to the traditional filesystem-based concept for ENSA1 and ENSA2 HA clusters. The significant difference is that the Filesystem resources and the related LVM2 and Raid1 resources have been removed. SAPStartSrv resources are used instead. SAPStartSrv is included in the resource group together with the vIP and the SAPInstance. SAPStartSrv needs to start before SAPInstance and needs to stop after SAPInstance. The vIP needs to start before SAPStartSrv and stops after it. All straightforward. The example shows two nodes. Of course, three nodes are possible for ENSA2 as well. Some public cloud environments need specific RAs for handling the vIP. Those RAs are members of the resource groups.

    Stack: corosync
    Current DC: ensanfs1 (version 1.1.18+20180430.b12c320f53.27.1b12c320f5) partition with quorum
    Last updated: Mon Jan 25 09:53:49 2021
    Last change: Mon Jan 25 09:42:54 2021 by hacluster via crmd on ensanfs2

    2 nodes configured
    7 resources configured

    Online: [ ensanfs1 ensanfs2 ]

    Full list of resources:

     rsc_stonith_sbd (stonith:external/sbd): Started ensanfs1
     Resource Group: grp_EVA_ASCS00
         rsc_IPAddr2_EVA_ASC00 (ocf::heartbeat:IPaddr2): Started ensanfs1
         rsc_SAPStartSrv_EVA_ASCS00 (ocf::suse:SAPStartSrv): Started ensanfs1
         rsc_SAPInstance_EVA_ASCS00 (ocf::heartbeat:SAPInstance): Started ensanfs1
     Resource Group: grp_EVA_ERS10
         rsc_IPAddr2_EVA_ERS10 (ocf::heartbeat:IPaddr2): Started ensanfs2
         rsc_SAPStartSrv_EVA_ERS10 (ocf::suse:SAPStartSrv): Started ensanfs2
         rsc_SAPInstance_EVA_ERS10 (ocf::heartbeat:SAPInstance): Started ensanfs2

SAPStartSrv is an open source project

The GitHub project SUSE/SAPStartSrv-resourceAgent implements the resource agent for the instance-specific SAP start framework. SAPStartSrv controls the instance-specific sapstartsrv process, which provides the API to start, stop and check an SAP instance. SAPStartSrv only starts, stops and probes for the server process. By intention it does not monitor the service. SAPInstance does in-line recovery of failed sapstartsrv processes instead. SAPStartSrv is to be included in a resource group together with the vIP and the SAPInstance. It needs to be started before SAPInstance starts and needs to be stopped after SAPInstance has been stopped. SAPStartSrv can be used with SAP NetWeaver 7.40 and later or with SAP S/4HANA (ABAP Platform >= 1909).

The project also contains sapping and sappong, the agents that hide and unhide the /usr/sap/sapservices file during system boot to avoid sapstartsrv being started for an SAP instance. sapping runs before sapinit and sappong runs after sapinit.

The project can be accessed at https://github.com/SUSE/SAPStartSrv-resourceAgent. Currently SUSE is in the process of adding the SAPStartSrv resource agent to the SUSE Linux Enterprise Server for SAP Applications product. It will still take some time until the package is available in the public repository. Productizing also includes quality assurance, documentation and a lot of further steps after architecture and implementation.

What are the next steps?

Currently we are working on integrating the new resource agent SAPStartSrv into SLES for SAP Applications. We will publish another blog post about that once the package is available.

This is Fabian Herschel from the SUSE SAP LinuxLab. Thanks to Lars Pinne for contributing the look-and-feel examples.

Links

https://www.suse.com/c/suse-ha-sap-certified/

https://www.suse.com/support/kb/doc/?id=000019293

https://www.suse.com/support/kb/doc/?id=000019244

https://www.suse.com/support/kb/doc/?id=000019944

https://www.suse.com/support/kb/doc/?id=000019924

Comments

Hi Mr. Fabian Herschel,

Hope you are doing great!

I have some questions about Simple Mount Structure for SAP Application Platform.

1. When Simple Mount Structure is released, which version will it be added to?

2. Once Simple Mount Structure is available, will the feature be optional or required?

Best Regards,

Jang

1. Please have a look at our release notes for SLES for SAP Applications 15 SP3.

You may also want to watch our SUSECon digital 2021 session BP-1046 and have a look at TUT-1010.

2. The feature is already published and optional. It's recommended because it's a great improvement.

Best

Fabian Herschel