Live Patching Meltdown – SUSE Engineer's Research Project (Part 3)

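Following Part 1 (Main Technical Obstacles of Live Patching Meltdown) and Part 2 (Virtual Address Mappings and the Meltdown Vulnerability), this part explains the changes required to the TLB flushing primitives.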

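To translate a virtual address into a physical one, the CPU has to walk the page table tree. Doing this walk for every memory access or address resolution would be slow, so the CPU stores the resulting translations in a special cache, the translation lookaside buffer (TLB). It is the kernel's job to instruct the CPU to flush stale TLB entries whenever it modifies the page tables.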
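The relationship between the page table walk and the TLB can be sketched with a small toy model. It assumes 4 KiB pages and the usual four table levels of x86_64 with 9 index bits each; nested dictionaries stand in for the page table tree. `ToyMMU`, `map_page` and `split_vaddr` are hypothetical names made up for illustration and have nothing to do with actual kernel code.

```python
# Toy model: a 4-level page table walk with a TLB in front of it.
# All names are illustrative assumptions, not kernel or hardware interfaces.

PAGE_SHIFT = 12      # 4 KiB pages
INDEX_BITS = 9       # 512 entries per table level
LEVELS = 4           # four levels on x86_64 with 48-bit virtual addresses


def split_vaddr(vaddr):
    """Return ([top-level index, ..., leaf index], page offset)."""
    offset = vaddr & ((1 << PAGE_SHIFT) - 1)
    vpn = vaddr >> PAGE_SHIFT
    mask = (1 << INDEX_BITS) - 1
    indices = [(vpn >> (level * INDEX_BITS)) & mask
               for level in reversed(range(LEVELS))]
    return indices, offset


def map_page(root, vaddr, frame):
    """Install (or replace) the mapping of vaddr's page to a physical frame."""
    indices, _ = split_vaddr(vaddr)
    node = root
    for idx in indices[:-1]:
        node = node.setdefault(idx, {})
    node[indices[-1]] = frame


class ToyMMU:
    def __init__(self, root):
        self.root = root   # nested dicts stand in for the page table tree
        self.tlb = {}      # virtual page number -> physical frame number
        self.walks = 0     # number of full page table walks performed

    def walk(self, vaddr):
        indices, _ = split_vaddr(vaddr)
        node = self.root
        for idx in indices:
            node = node[idx]       # a KeyError here would model a page fault
        self.walks += 1
        return node                # the leaf holds the frame number

    def translate(self, vaddr):
        vpn = vaddr >> PAGE_SHIFT
        if vpn not in self.tlb:    # TLB miss: walk the tree and cache the result
            self.tlb[vpn] = self.walk(vaddr)
        offset = vaddr & ((1 << PAGE_SHIFT) - 1)
        return (self.tlb[vpn] << PAGE_SHIFT) | offset

    def flush_tlb(self):
        self.tlb.clear()


root = {}
map_page(root, 0x7F0000001000, 0x42)
mmu = ToyMMU(root)
mmu.translate(0x7F0000001234)          # miss: one walk
mmu.translate(0x7F0000001FF0)          # hit: same page, no second walk
map_page(root, 0x7F0000001000, 0x99)   # the page table changes ...
stale = mmu.translate(0x7F0000001234)  # ... but the TLB still answers with frame 0x42
mmu.flush_tlb()
fresh = mmu.translate(0x7F0000001234)  # after the flush the new frame is seen
```

The stale result after `map_page` is exactly the situation the kernel has to prevent by flushing after a page table update.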

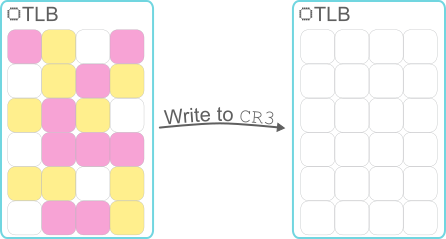

Flushing the TLB by writing to CR3

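Writing a pointer to a new page table root into the special CR3 register invalidates the TLB wholesale (global pages, covered further down, are the exception). For example, when the kernel schedules a process at context switch and installs the page table of the associated memory map into CR3, all translations cached on behalf of the previously running process are implicitly invalidated.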

Bear in mind, however, that unnecessary TLB flushes hurt performance. Not only does the flush itself take time, it also throws away the valuable information gained from earlier page table walks.

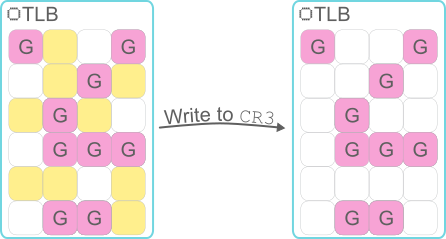

TLB entries for global pages survive writes to CR3

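Recall that the mappings for the kernel space region (the upper range of every virtual address space) are always kept in sync across the page tables of all processes. It would therefore be desirable if translations cached from that region could somehow survive the CR3 write at context switch. Indeed, x86_64 CPUs provide a feature called "global pages". When it is enabled, individual mappings in the page tables can be marked as global, and translations tagged this way are not affected by flushes caused by CR3 writes. Until KPTI came along, the Linux kernel marked all translations in the kernel space region as "global" to keep them from being needlessly invalidated at context switch.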
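A minimal sketch of that behavior, assuming a TLB without PCIDs in which every entry carries a "global" flag. `ToyTLB` and the page and frame numbers are made up for illustration.

```python
# Toy TLB whose entries carry a "global" flag.
# Hypothetical model for illustration only, not kernel code.

class ToyTLB:
    def __init__(self):
        self.entries = {}    # virtual page number -> (frame, is_global)

    def insert(self, vpn, frame, is_global=False):
        self.entries[vpn] = (frame, is_global)

    def write_cr3(self):
        """Model a CR3 write without PCID: every non-global entry is dropped."""
        self.entries = {vpn: e for vpn, e in self.entries.items() if e[1]}

    def lookup(self, vpn):
        entry = self.entries.get(vpn)
        return None if entry is None else entry[0]


tlb = ToyTLB()
tlb.insert(0x7F0000001, 0x42)                        # user page of the old process
tlb.insert(0xFFFF880000001, 0x1000, is_global=True)  # kernel page, marked global
tlb.write_cr3()                                      # context switch
user_hit = tlb.lookup(0x7F0000001)                   # gone: must be walked again
kernel_hit = tlb.lookup(0xFFFF880000001)             # still cached
```

In this model the user translation has to be rebuilt by a new page table walk after the context switch, while the kernel translation stays cached.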

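This behavior, however, defeats KPTI's central purpose of hiding kernel address space mappings from user space. The Meltdown live patch therefore has to turn the CPU's "global pages" feature off. In theory this is easy: just write a 0 to a specific bit of the special CR4 register, commonly referred to as CR4.PGE. There is one catch, though. The Linux kernel's TLB flushing primitives rely on the architecturally defined effect that flipping CR4.PGE from 1 to 0 invalidates all TLB entries, global ones included. Built on the assumption that CR4.PGE is always 1, the implementation simply clears the bit and then sets it again. If the live patch disabled global pages, that assumption would no longer hold and the running kernel's TLB invalidation mechanism would break.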
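The failure mode can be made concrete with a toy model. It assumes that a CR4 write only triggers a full flush when it actually changes the PGE bit (bit 7 of CR4), and that the unpatched primitive reads CR4, writes it back with PGE cleared, and then restores the value it read. `ToyCPU` and `flush_tlb_global_unpatched` are hypothetical names, and the function is a simplified sketch rather than the kernel's actual code.

```python
# Toy model of a flush primitive that relies on toggling CR4.PGE.
# Hypothetical illustration only, not kernel code.

X86_CR4_PGE = 1 << 7    # bit 7 of CR4 enables global pages


class ToyCPU:
    def __init__(self, cr4):
        self.cr4 = cr4
        self.tlb = {}    # virtual page number -> (frame, is_global)

    def write_cr4(self, value):
        # Assumption of this model: only a change of PGE flushes the TLB,
        # and such a change drops all entries, global ones included.
        if (value ^ self.cr4) & X86_CR4_PGE:
            self.tlb.clear()
        self.cr4 = value


def flush_tlb_global_unpatched(cpu):
    """Written under the assumption that CR4.PGE is 1 on entry."""
    cr4 = cpu.cr4
    cpu.write_cr4(cr4 & ~X86_CR4_PGE)   # 1 -> 0: flushes everything
    cpu.write_cr4(cr4)                  # restore the previous value


# With global pages enabled the primitive works as intended.
cpu_on = ToyCPU(cr4=X86_CR4_PGE)
cpu_on.tlb = {1: (0x10, False), 2: (0x20, True)}
flush_tlb_global_unpatched(cpu_on)
flushed = (cpu_on.tlb == {})

# With global pages disabled neither write changes PGE, so nothing is flushed.
cpu_off = ToyCPU(cr4=0)
cpu_off.tlb = {1: (0x10, False)}
flush_tlb_global_unpatched(cpu_off)
stale_left = (1 in cpu_off.tlb)
```

In the second case the stale entry survives a call that was supposed to be a full flush, which is the breakage described above.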

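Fortunately, as mentioned earlier, the global kGraft patch state can be queried, so this problem is easy to solve. The TLB flushing primitives are patched with kGraft so that they are compatible with either setting of CR4.PGE, and CR4.PGE is cleared only after the live patch transition has completed globally.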
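One way such a setting-agnostic primitive could look is sketched below as a self-contained toy model. It assumes that toggling CR4.PGE is used while the bit is set, and that a plain CR3 reload is sufficient once the bit is clear because no global entries can exist then. This fallback is an assumption for illustration, not necessarily what the actual live patch does; `ToyCPU`, `flush_tlb_global_patched` and `disable_global_pages` are hypothetical names.

```python
# Toy model of a flush primitive that works with either CR4.PGE setting,
# plus the ordering constraint for clearing the bit.
# Hypothetical illustration only, not the actual kGraft patch.

X86_CR4_PGE = 1 << 7


class ToyCPU:
    def __init__(self, cr4):
        self.cr4 = cr4
        self.tlb = {}    # virtual page number -> (frame, is_global)

    def write_cr4(self, value):
        # Changing PGE drops every entry, global ones included.
        if (value ^ self.cr4) & X86_CR4_PGE:
            self.tlb.clear()
        self.cr4 = value

    def write_cr3(self):
        # A CR3 write without PCID drops the non-global entries only.
        self.tlb = {v: e for v, e in self.tlb.items() if e[1]}


def flush_tlb_global_patched(cpu):
    cr4 = cpu.cr4
    if cr4 & X86_CR4_PGE:
        cpu.write_cr4(cr4 & ~X86_CR4_PGE)   # the original toggle still works
        cpu.write_cr4(cr4)
    else:
        cpu.write_cr3()   # no global entries exist, so this flushes everything


def disable_global_pages(cpus, transition_complete):
    """Clear CR4.PGE everywhere, but only once no unpatched primitive can run."""
    if not transition_complete:
        return False
    for cpu in cpus:
        cpu.write_cr4(cpu.cr4 & ~X86_CR4_PGE)
    return True
```

The ordering matters: if `disable_global_pages` ran while some task could still execute the old primitive, that task would be left with a flush that silently does nothing.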

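Global pages exist to reduce performance overhead, so disabling them naturally adds overhead. KPTI, however, can make matters even worse. On every entry into the kernel, the stripped-down shadow page table used for user space has to be replaced by the fully populated one. Likewise, the shadow copy has to be restored on every return to user space. This means a large number of additional CR3 writes, each accompanied by a performance-harming TLB invalidation. Reasonably recent Intel CPUs have a feature called "process-context identifiers" (PCID), which allows the TLB invalidation caused by CR3 writes to be more selective. A KPTI-enabled kernel can use it to avoid all flushes on kernel entry and many of the flushes on return to user space. The PCID semantics are such that it is safe for the feature to be disabled in the kernel being live patched and to simply enable it on the fly. To actually make use of it, the kGraft-patched TLB flushing primitives have to take PCIDs into account, but that problem has already been solved.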
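How PCIDs make CR3 writes more selective can be sketched with a toy model. It assumes PCIDs are enabled, so that bits 11:0 of the value written to CR3 carry the PCID and bit 63 requests that no cached translations be invalidated. `PcidTLB`, the PCID values and the root addresses are hypothetical and chosen only for the example.

```python
# Toy model of a PCID-tagged TLB. Hypothetical illustration, not kernel code.

CR3_PCID_MASK = 0xFFF    # bits 11:0 of CR3 hold the PCID when PCIDs are enabled
CR3_NOFLUSH = 1 << 63    # bit 63 of the written value: keep cached entries

KERNEL_PCID, USER_PCID = 1, 2            # arbitrary example values
KERNEL_ROOT, USER_ROOT = 0x1000, 0x2000  # page-aligned example root addresses


class PcidTLB:
    def __init__(self):
        self.current_pcid = 0
        self.entries = {}    # (pcid, virtual page number) -> frame

    def write_cr3(self, value):
        pcid = value & CR3_PCID_MASK
        if not value & CR3_NOFLUSH:
            # Only entries tagged with the PCID being loaded are invalidated.
            self.entries = {k: f for k, f in self.entries.items()
                            if k[0] != pcid}
        self.current_pcid = pcid

    def insert(self, vpn, frame):
        self.entries[(self.current_pcid, vpn)] = frame

    def lookup(self, vpn):
        # Only entries tagged with the current PCID can hit.
        return self.entries.get((self.current_pcid, vpn))


tlb = PcidTLB()
tlb.write_cr3(KERNEL_ROOT | KERNEL_PCID)
tlb.insert(0xFFFF880000001, 0x1000)                  # cached while in the kernel
tlb.write_cr3(USER_ROOT | USER_PCID | CR3_NOFLUSH)   # return to user space
seen_from_user = tlb.lookup(0xFFFF880000001)         # other PCID: no hit
tlb.insert(0x7F0000001, 0x42)
tlb.write_cr3(KERNEL_ROOT | KERNEL_PCID | CR3_NOFLUSH)  # kernel entry, no flush
seen_from_kernel = tlb.lookup(0xFFFF880000001)       # still cached
```

In this model the kernel's translations are kept across the round trip through user space, yet they never produce a hit while the user PCID is active.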

Continue with Part 4, the final part of the series, which draws the conclusions from this very interesting project.
