Unlock AI Efficiency with NVIDIA MIG vGPU Multitenancy on Kubernetes with SUSE Virtualization

What is NVIDIA MIG

NVIDIA Multi-Instance GPU (MIG) technology allows a single physical GPU to be partitioned into multiple isolated instances, enabling several workloads to run concurrently with dedicated compute and memory resources. MIG improves GPU utilization and supports secure multi-tenant AI workloads.

GPU capacity is one of the most expensive and constrained resources in modern AI infrastructure. Training, inference, analytics, and MLOps pipelines often compete for the same GPU hardware, yet many of these workloads use only a fraction of a full GPU, leaving costly capacity idle.

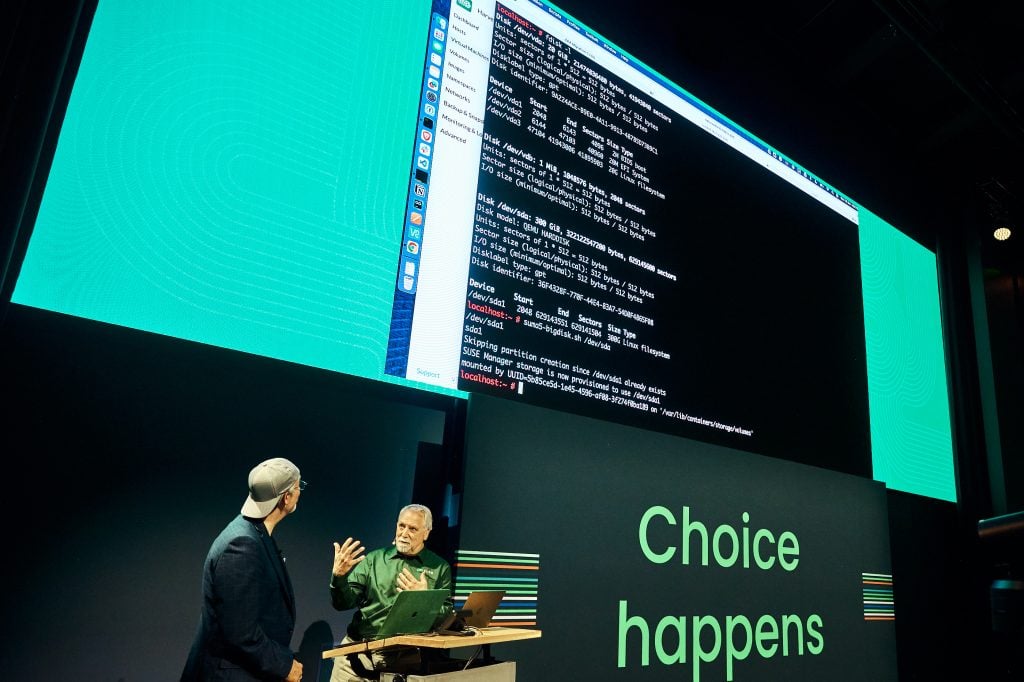

At KubeCon EU 2026, SUSE Virtualization introduces native support for NVIDIA Multi-Instance GPU (MIG) vGPU partitioning. Harvester automatically detects MIG-capable GPUs and exposes isolated GPU instances to virtual machines. This means multiple VMs can share a single physical GPU as if each had a separate GPU, while keeping dedicated compute, memory bandwidth, and fault isolation.

This allows organizations to run AI workloads and traditional virtual machines on the same open platform with complete GPU multitenancy.

Most AI workloads don’t need an entire GPU

Inference services often use only a small percentage of available compute but must run continuously. Training workloads consume significant compute but run intermittently. Data pipelines may require memory bandwidth but minimal processing power. Video analytics workloads need steady throughput but moderate model complexity.

The traditional model of one workload per GPU is simple but inefficient. A workload using 15 percent of an A100 still reserves the entire device. Across a large AI platform this leaves significant GPU capacity unused.
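To make that cost concrete, here is a minimal Python sketch of the arithmetic. The workload names and utilization figures are invented for illustration (only the 15 percent figure echoes the text above), and the sketch assumes that demands add linearly, which real workloads will not do exactly.

```python
# Hypothetical per-workload GPU utilization, as a fraction of one full GPU.
# These numbers are illustrative assumptions, not measurements.
workloads = {
    "inference-api": 0.15,
    "video-analytics": 0.30,
    "feature-pipeline": 0.10,
    "notebook": 0.20,
}


def idle_fraction(utilizations, gpus):
    """Fraction of total GPU capacity left unused across `gpus` devices."""
    used = sum(utilizations)
    return 1.0 - used / gpus


# One workload per GPU: four devices reserved, most of each sitting idle.
dedicated_idle = idle_fraction(workloads.values(), gpus=len(workloads))

# All four workloads sharing one GPU (assumes their demands add linearly).
shared_idle = idle_fraction(workloads.values(), gpus=1)

print(f"dedicated: {dedicated_idle:.0%} idle across {len(workloads)} GPUs")
print(f"shared:    {shared_idle:.0%} idle on 1 GPU")
```

With these made-up numbers, the dedicated model leaves roughly 81 percent of four GPUs idle, while consolidating onto one GPU leaves about 25 percent idle.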

Software-level GPU sharing methods can help but introduce operational risk. Approaches such as time-slicing and CUDA MPS do not provide hardware-level fault isolation, so a failure in one workload can impact others sharing the same GPU.

For teams running both VMs and AI workloads, the result is often two separate infrastructure stacks. AI workloads run on specialized GPU clusters while the rest of the environment remains virtualized. This adds operational complexity, tooling, and cost.

Hardware-level GPU partitioning with MIG

NVIDIA Multi-Instance GPU partitioning divides the GPU spatially, in hardware, on the die itself. Unlike time-sharing, MIG creates independent, isolated instances, each with its own dedicated compute engines, memory, and cache on the chip. A fault in one MIG instance cannot affect another. Performance is deterministic and predictable. From the workload’s perspective, each MIG instance looks and behaves like a dedicated GPU.
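As a rough illustration of what spatial partitioning means for capacity planning, the sketch below models the MIG profiles of an A100 40GB as fixed shares of 7 compute slices and 8 memory slices. The helper name `fits_on_one_gpu` is hypothetical, and the check is deliberately simplified: it only compares slice totals, whereas the NVIDIA driver also enforces placement rules, so treat a passing result as necessary rather than sufficient.

```python
# MIG profiles for an A100 40GB as (compute slices, memory slices).
# Each memory slice is 5 GB; the GPU has 7 compute and 8 memory slices.
A100_40GB_PROFILES = {
    "1g.5gb": (1, 1),
    "2g.10gb": (2, 2),
    "3g.20gb": (3, 4),
    "4g.20gb": (4, 4),
    "7g.40gb": (7, 8),
}
TOTAL_COMPUTE_SLICES = 7
TOTAL_MEMORY_SLICES = 8


def fits_on_one_gpu(requested):
    """Necessary (not sufficient) check: do the slice totals fit on one GPU?

    Real MIG placement also restricts where each profile may start, so a
    layout that passes here can still be rejected by the driver.
    """
    compute = sum(A100_40GB_PROFILES[p][0] for p in requested)
    memory = sum(A100_40GB_PROFILES[p][1] for p in requested)
    return compute <= TOTAL_COMPUTE_SLICES and memory <= TOTAL_MEMORY_SLICES


print(fits_on_one_gpu(["3g.20gb", "2g.10gb", "1g.5gb", "1g.5gb"]))  # True
print(fits_on_one_gpu(["4g.20gb", "4g.20gb"]))  # False
```

The second request fails because two 4g.20gb instances would need 8 compute slices and only 7 exist. Other GPU models expose different profile sets, so the table would need to be replaced for them.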

In SUSE Virtualization 1.7, Harvester extends MIG support to virtual machines. The platform automatically detects MIG-capable GPUs in your cluster and enables dynamic partitioning via the Kubernetes device plugin ecosystem. When a VM is deployed with a vGPU request, SUSE Virtualization allocates a MIG instance to that VM. The instance is completely isolated at the hardware level and appears as a standard GPU device within the guest.
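For readers who think in manifests, the following Python sketch builds the kind of KubeVirt `VirtualMachine` fragment that carries a GPU request. The `gpus` list with `name` and `deviceName` is part of the KubeVirt API; everything else here is an assumption for illustration. In particular, the device name is a placeholder (the real value is whatever resource name your cluster advertises for the MIG-backed vGPU), the function `vm_with_gpu` is hypothetical, and the fragment omits disks, volumes, and networks, so it is not a deployable VM. SUSE Virtualization may generate the equivalent for you through its UI.

```python
import json

# Placeholder resource name: the real value is whatever your cluster
# advertises for the MIG-backed vGPU profile. This one is made up.
HYPOTHETICAL_DEVICE_NAME = "nvidia.com/EXAMPLE_MIG_VGPU_PROFILE"


def vm_with_gpu(name, device_name):
    """Build a minimal KubeVirt VirtualMachine fragment requesting one GPU."""
    return {
        "apiVersion": "kubevirt.io/v1",
        "kind": "VirtualMachine",
        "metadata": {"name": name},
        "spec": {
            "runStrategy": "Always",
            "template": {
                "spec": {
                    "domain": {
                        "devices": {
                            # KubeVirt attaches host devices listed under `gpus`.
                            "gpus": [{"name": "gpu0", "deviceName": device_name}],
                        },
                        "resources": {"requests": {"memory": "8Gi"}},
                    },
                },
            },
        },
    }


manifest = vm_with_gpu("inference-vm", HYPOTHETICAL_DEVICE_NAME)
print(json.dumps(manifest, indent=2))
```

Keeping the device name as a parameter reflects the point made above: the guest simply sees a GPU, and which MIG instance backs it is decided by the resource name the VM requests.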

From idle GPUs to AI-ready infrastructure

NVIDIA MIG vGPU changes how organizations use expensive GPU hardware. Instead of dedicating an entire GPU to a single workload, multiple AI tasks can run simultaneously on isolated MIG slices. Training, inference, analytics, and data pipelines can share the same physical GPU while maintaining predictable performance and strict hardware-level isolation. The result is higher GPU utilization and more useful work from the same infrastructure investment.

This also unlocks true multi-tenant AI infrastructure. Different teams or projects can safely share GPU nodes without risking interference between workloads. Platform teams can offer GPU resources as a shared service while maintaining the isolation guarantees required by modern AI development.

Equally important, AI workloads and traditional virtual machines no longer need separate infrastructure stacks. With SUSE Virtualization, both can run on the same Kubernetes-based platform. Operations teams manage a single environment, reducing operational complexity and eliminating the need for parallel AI and virtualization platforms.

Because MIG vGPU support is implemented through KubeVirt and the Kubernetes device plugin ecosystem, it fits naturally into existing Kubernetes operations. Organizations gain GPU acceleration for virtual machines without introducing proprietary hypervisor extensions or new management layers.

Part of a broader AI-ready infrastructure story

MIG vGPU is one piece of SUSE Virtualization’s broader commitment to AI-ready infrastructure. Because the platform runs on open-source KubeVirt and Longhorn storage, your GPU nodes benefit from the same distributed storage, live migration, and lifecycle management capabilities as the rest of your cluster. AI workloads are first-class Kubernetes workloads with the full enterprise operations story behind them.

Your AI infrastructure benefits from other SUSE Virtualization announcements including VM Auto Balance for resource optimization, upgrade controls for zero-disruption maintenance, and the observability integrations your platform team needs to monitor complex multi-tenant GPU workloads.

For teams migrating away from VMware, this matters specifically because VMware’s vSphere + NVIDIA AI Enterprise stack has been one of the more defensible reasons to stay. SUSE Virtualization 1.7 removes that argument. You can match the VM-based GPU access model of large proprietary vendors with open-source tooling and no VMware licensing overhead.

Available now, GA in SUSE Virtualization 1.7

MIG vGPU support is Generally Available in SUSE Virtualization 1.7, released in January 2026. If you’re already running 1.7 on MIG-capable hardware, you can enable this today. Review the vGPU documentation for prerequisites, configuration steps, and supported MIG profiles.

If you’re evaluating SUSE Virtualization for an AI-heavy use case, the Harvester GitHub repository is the best place to track development, file issues, and engage with the community driving this capability forward.

Supported hardware

MIG vGPU support in SUSE Virtualization 1.7 targets NVIDIA data center GPUs with MIG capability: the A100, H100, and H200 families. These are the GPUs that power the vast majority of serious AI infrastructure deployments today and the GPUs where multi-tenancy delivers the most value given their price points.

For detailed configuration guidance on enabling MIG vGPU in your cluster, see the SUSE Virtualization vGPU documentation.

Put every GPU to work

AI infrastructure costs are only going up. The teams that win are the ones that extract maximum value from every GPU they own. MIG vGPU in SUSE Virtualization 1.7 gives you the hardware-level isolation to do that safely, at scale, across VMs and containerized workloads on a single open-source platform.

Every idle GPU cycle is money you’ve already spent. SUSE Virtualization 1.7 helps you spend it once and use it fully.

See these capabilities in action at KubeCon EU 2026 in Amsterdam, or connect with a SUSE expert to explore what’s possible for your organization.

Ready to get started?

Visit the SUSE Virtualization product page to explore the full platform. Dive into the vGPU configuration documentation to plan your deployment. If you’re at KubeCon EU 2026, find the SUSE team to see a live demonstration of MIG vGPU in action across a multi-tenant AI cluster. And if you want to contribute or follow the technical development, join the conversation on the Harvester GitHub.

SUSE Virtualization is the enterprise distribution of Harvester, the open-source HCI project built on Kubernetes. Track upcoming AI infrastructure features on the Harvester roadmap.

Come see us at KubeCon EU in Amsterdam. Visit the SUSE booth, join our sessions, and experience firsthand what an AI-native, cloud-native platform can do for your organization.

For the latest updates, visit suse.com/kubecon and follow us on social media throughout the week.
