Technical Deep-Dive of Container Runtimes

As you might have already seen, SUSE CaaS Platform will soon support CRI-O as a container runtime. In this blog, I will dig into what a container runtime is and how CRI-O differs architecturally from Docker. I’ll also look at how the Container Runtime Interface (CRI) and the two Open Container Initiative (OCI) specs are used to promote stability in the container ecosystem.

The Kubic team has already written a good blog about the business choice to move to CRI-O as the default. (TL;DR: CRI-O is fully open source, which allows SUSE to give a better level of support and service than it can with Docker.) Those are fantastic reasons, but I want to dig into the technological reasons for the move. In this blog, I’m going to assume a basic understanding of containerization and Kubernetes.

So, What is a Container Runtime, anyways?

As I was researching this topic, it became very clear to me that the term “Container Runtime” is heavily overloaded. From what I can tell, it can mean anything from the entire system used to run containers (such as Docker), to the container runtime shim that translates state changes from Kubernetes to another interface, to the component that actually spawns the container processes. To ease some of this confusion, in this blog I will use the term “container engine” for the component that pulls together the sandbox and “container runtime” for the component that runs the container itself.

Abstractly, the container engine is the part of a Kubernetes system that is responsible for running containerized workloads. The kubelet tells this engine which containers should be running, along with all the metadata about how each container’s environment should be administered. Much of the confusion creeps in because some engines and runtimes (like Docker) include a ton of other features that Kubernetes does not need to run containers.

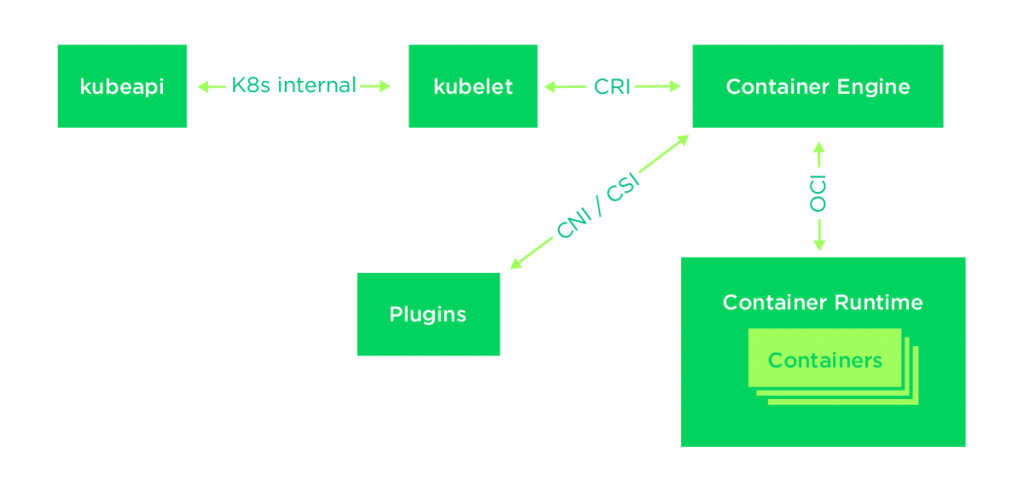

For help visualizing all the moving parts, here is a generalized architecture diagram.

The core responsibility of the container engine is to provide a way for an orchestrator (in our case, Kubernetes) to control and monitor the containers that are currently running.

It handles:

- Container Lifecycle Management

- Loading and verifying container images

- System resource monitoring

- Allocating and isolating resources

To complete these activities, the engine pulls together the resources needed to run a container. It uses standardized interfaces (such as CNI, CSI, and OCI) to coordinate these resources.
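To make the coordination role concrete, here is a minimal sketch of an engine that depends only on small interfaces. All names here (`NetworkPlugin`, `Runtime`, `ToyEngine`, and the fake implementations) are hypothetical and invented for illustration; they are not the real CNI or OCI APIs.

```python
from dataclasses import dataclass, field
from typing import Protocol


class NetworkPlugin(Protocol):
    """Hypothetical stand-in for a networking plugin behind a standard interface."""

    def attach(self, sandbox_id: str) -> str: ...


class Runtime(Protocol):
    """Hypothetical stand-in for a container runtime behind a standard interface."""

    def run(self, sandbox_id: str, image: str) -> str: ...


class FakeNetwork:
    def attach(self, sandbox_id: str) -> str:
        return f"net:{sandbox_id}"


class FakeRuntime:
    def run(self, sandbox_id: str, image: str) -> str:
        return f"container:{sandbox_id}:{image}"


@dataclass
class ToyEngine:
    """Pulls resources together; knows the interfaces, not the implementations."""

    network: NetworkPlugin
    runtime: Runtime
    log: list = field(default_factory=list)

    def start_workload(self, sandbox_id: str, image: str) -> str:
        self.log.append(f"verify image {image}")          # image handling
        self.log.append(self.network.attach(sandbox_id))  # resource setup
        container = self.runtime.run(sandbox_id, image)   # hand off to the runtime
        self.log.append(container)
        return container
```

Because the engine only sees the two interfaces, either fake could be swapped for a different implementation without touching `ToyEngine`.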

Container Runtime Interface

The Container Runtime Interface (CRI) API specification was created by the SIG-Node special interest group. The API lets Kubernetes invert the control of how images are being managed and run to a pluggable, external engine. This inversion of control allows for better system testing by the project contributors and easier component development by people building new container runtimes.

The original announcement does an amazing job of highlighting the need for and goals of the new spec. The main driver at the time was the emergence of rkt (from CoreOS) as a competitor to Docker. At the time, Docker was deeply embedded in Kubernetes, and using rkt meant recompiling Kubernetes with substantial changes.

The CRI is built on gRPC and Protobuf over a Unix socket. The spec can be found on GitHub.
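As a rough illustration of what travels over that socket, the sketch below mimics the order of calls a kubelet typically makes to start one container. The method names mirror RPCs from the CRI's runtime and image services, but everything else is an assumption: `FakeCRIEngine` and `start_pod` are hypothetical, the arguments are heavily simplified, and no gRPC or socket is involved.

```python
import itertools


class FakeCRIEngine:
    """In-memory stand-in for a CRI server (hypothetical, no gRPC involved)."""

    def __init__(self):
        self._ids = itertools.count(1)
        self.images = set()
        self.sandboxes = {}
        self.containers = {}

    # --- image service ---
    def PullImage(self, image):
        self.images.add(image)
        return image

    # --- runtime service ---
    def RunPodSandbox(self, pod_name):
        sandbox_id = f"sandbox-{next(self._ids)}"
        self.sandboxes[sandbox_id] = pod_name
        return sandbox_id

    def CreateContainer(self, sandbox_id, image):
        if sandbox_id not in self.sandboxes:
            raise KeyError(f"unknown sandbox {sandbox_id}")
        if image not in self.images:
            raise KeyError(f"image {image} has not been pulled")
        container_id = f"ctr-{next(self._ids)}"
        self.containers[container_id] = {
            "sandbox": sandbox_id,
            "image": image,
            "state": "CONTAINER_CREATED",
        }
        return container_id

    def StartContainer(self, container_id):
        self.containers[container_id]["state"] = "CONTAINER_RUNNING"

    def ContainerStatus(self, container_id):
        return self.containers[container_id]["state"]


def start_pod(engine, pod_name, image):
    """Simplified call order: sandbox first, then image, then container."""
    sandbox_id = engine.RunPodSandbox(pod_name)
    engine.PullImage(image)
    container_id = engine.CreateContainer(sandbox_id, image)
    engine.StartContainer(container_id)
    return container_id
```

Any engine that answers these calls can sit behind the kubelet, which is the point of the pluggable design.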

Open Container Initiative

On the other side of the container engine is the container runtime controlled through the Open Container Initiative specs. There are two specifications (linked below) produced by the OCI: OCI-runtime and OCI-image. These specs work together to define how to start containers through the container runtime.

The OCI runtime spec defines how to interact with a container runtime to control the lifecycle of a container. While it might seem redundant (given that CRI seems to define the same thing), it adds another layer of contract definition to the architecture. As with CRI, this layer of contract gives some guarantees to the system regarding how the developer’s code will run.
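The lifecycle contract can be pictured as a small state machine. The sketch below uses the operation names (`create`, `start`, `kill`, `delete`, `state`) and status values (`created`, `running`, `stopped`) from the OCI runtime spec, but `ToyOCIRuntime` itself is hypothetical and simplified: in particular, it assumes `kill` always ends the container process, and it skips the transient `creating` status.

```python
class ToyOCIRuntime:
    """Toy model of the OCI runtime lifecycle (hypothetical, not a real runtime)."""

    def __init__(self):
        self._status = {}

    def create(self, container_id):
        if container_id in self._status:
            raise ValueError(f"{container_id} already exists")
        self._status[container_id] = "created"

    def start(self, container_id):
        self._require(container_id, "created")
        self._status[container_id] = "running"

    def kill(self, container_id):
        # Simplification: the signal is assumed to terminate the process.
        self._require(container_id, "created", "running")
        self._status[container_id] = "stopped"

    def delete(self, container_id):
        # Only a stopped container may be deleted.
        self._require(container_id, "stopped")
        del self._status[container_id]

    def state(self, container_id):
        return self._status[container_id]

    def _require(self, container_id, *allowed):
        status = self._status[container_id]
        if status not in allowed:
            raise RuntimeError(
                f"{container_id} is {status}, expected one of {allowed}"
            )
```

An engine written against these operations does not need to know which runtime binary is answering them.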

The container engine provides the runtime with a filesystem bundle to run, unpacked from an image that conforms to the OCI-image spec. Within this filesystem bundle are all the files needed at runtime and a configuration specifying what to run in the container (also known as its entrypoint).
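For a feel of what such a bundle looks like on disk, here is a minimal sketch that writes a directory holding a `config.json` and an empty `rootfs` directory. The helper name `write_bundle` is hypothetical, and the configuration is deliberately stripped down to a handful of fields; a real one carries far more (mounts, namespaces, capabilities, and so on).

```python
import json
import tempfile
from pathlib import Path


def write_bundle(bundle_dir, args):
    """Write a stripped-down bundle: config.json plus an empty rootfs/."""
    bundle = Path(bundle_dir)
    (bundle / "rootfs").mkdir(parents=True, exist_ok=True)
    config = {
        "ociVersion": "1.0.0",
        # What to run inside the container (its entrypoint).
        "process": {"args": list(args), "cwd": "/"},
        # Where the container's root filesystem lives, relative to the bundle.
        "root": {"path": "rootfs"},
    }
    (bundle / "config.json").write_text(json.dumps(config, indent=2))
    return config


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        write_bundle(tmp, ["/bin/sh", "-c", "echo hello"])
        print(sorted(p.name for p in Path(tmp).iterdir()))
```

In a real system the engine would populate `rootfs` from the image layers before handing the bundle path to the runtime.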

For some extremely dense reading, the specs can be found here and here.

Open Source Docker

Open source Docker is a fully-featured container system. The first system to really gain any traction, Docker became the dominant technology for building and deploying what we now know as containers. Due to this, it has been the default runtime of Kubernetes. It also has its own command line interface, image spec, and an image building service.

Docker runs as a daemon on each node and offers a RESTful API that clients use for controlling container state. Its command line client works through this REST API to facilitate building, maintaining, and using container images. It also includes a local image repository to store its own images, containerd to manage the container lifecycle, and runC to spawn the container process.

Since Docker doesn’t implement the CRI spec internally, a shim is needed for it to work with Kubernetes. This shim translates CRI commands into calls to Docker’s RESTful API.
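The translation step can be pictured as a lookup from CRI calls to Docker Engine API requests. The sketch below is illustrative only: the table and the `translate` helper are hypothetical, request bodies are omitted, and the actual shim is considerably more involved than a static mapping.

```python
# Hypothetical mapping from a few CRI calls to Docker Engine API endpoints.
CRI_TO_DOCKER = {
    "PullImage": ("POST", "/images/create?fromImage={image}"),
    "CreateContainer": ("POST", "/containers/create"),
    "StartContainer": ("POST", "/containers/{id}/start"),
    "StopContainer": ("POST", "/containers/{id}/stop"),
    "RemoveContainer": ("DELETE", "/containers/{id}"),
}


def translate(cri_call, **params):
    """Return the (HTTP method, path) a shim might issue for a CRI call."""
    method, template = CRI_TO_DOCKER[cri_call]
    return method, template.format(**params)
```

For example, `translate("StartContainer", id="abc")` yields a `POST` to `/containers/abc/start`. Every CRI call pays for this extra hop, which is part of what an engine that speaks CRI natively avoids.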

CRI-O

CRI-O is a lightweight implementation of the CRI spec that can be used in place of open source Docker. Due to the minimal nature of the codebase, its maintainers can more effectively keep up with the needs of the larger Kubernetes community. As mentioned above, the project is fully open source with a substantial number of companies helping build it. (As an aside, this means that SUSE support staff can directly fix any issues found.)

The reason I hesitate to call it a container runtime is that in implementing the CRI spec, CRI-O uses other runtimes to do the heavy lifting. For communication with the container runtime, it uses the Open Container Initiative runtime spec. CRI-O acts as the intermediary between Kubernetes on the CRI side and each of the other moving parts on the node (such as networking, storage, and the container runtime).

By default, it uses runC as the container runtime through the OCI runtime-spec. Because it relies on this interface, cluster administrators can set up different container runtimes to allow for different levels of isolation or hardware support, giving them more control over the container environment. (For example, high performance computing environments looking for better device support could use Singularity, and those looking for better isolation could use Kata Containers.)
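The idea of swapping runtimes behind one interface can be sketched as a lookup with a default. Everything in this example is an assumption made for illustration: the handler names, the binary paths, and the `select_runtime` helper are hypothetical and do not reflect CRI-O's actual configuration format.

```python
# Hypothetical table of configured runtimes; the paths are placeholders.
RUNTIMES = {
    "runc": "/usr/bin/runc",
    "kata": "/usr/bin/kata-runtime",
}
DEFAULT_RUNTIME = "runc"


def select_runtime(handler=""):
    """Pick the runtime binary for a workload, falling back to the default."""
    name = handler or DEFAULT_RUNTIME
    try:
        return name, RUNTIMES[name]
    except KeyError:
        raise ValueError(f"no runtime configured for handler {name!r}") from None
```

Because every entry speaks the same runtime interface, the engine's own logic stays the same whichever binary is selected.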

Benefits

With the adoption of these standards, Kubernetes (and its surrounding ecosystem) gains a lot of stability. Users can now trust that none of the components break when surrounding ones change their implementation. It also allows for a more diverse ecosystem and competition while maintaining conformance.

While the Kubic project mentioned above is a great project, a lot of companies want (or need) a greater level of support and services. With cloud native development (especially during the transition period) there are a lot of moving and competing parts. SUSE CaaS Platform has been curated to alleviate the frustration of needing to understand each of the hundreds of options possible just to get a system up and running. Being the only truly open, open source company allows for better support in the ever-growing container ecosystem as we can give support all the way up and down the stack.
