How You Can Make Ceph Better

Well, here we are: SUSE Enterprise Storage 6 is out the door and seeing customer deployments. Product management is happy, the engineers are relieved, and our customers continue to move forward with more advanced features, reliability, and scalability than ever before. With this successful launch behind us, it is time to ask, “How can we continue to make Ceph even better?”

Quite frankly, the answer is feedback. We already have the normal channels for it, such as working with account teams, customers, partners, and community forums, and these are all great. BUT… there is something else that can provide the empirical data that the engineers crave. It’s called the Ceph Telemetry Module.

Basically, this module is the starting point for functionality that every user of a major storage system is familiar with: a regular call home to the mothership with some statistical data. In this case, though, the mothership isn’t a single vendor; it is the true mothership, the Open Source community behind Ceph and the Ceph Foundation. This fully supports the idea of no vendor lock-in by ensuring that we all have access to the same aggregated data.

Let’s start with why providing the information is important. A recent blog post on Ceph.io by my colleague Lars Marowsky-Brée, SUSE Distinguished Engineer, clearly illustrates the value: it identifies a potential issue with data distribution caused by improper placement group (PG) counts on existing clusters. Although only a small number of systems are reporting, that is enough to indicate a potential problem across a fair number of systems. Thankfully, this concern is fairly simple to remedy and is likely to become less of a problem given the recent introduction of PG autoscaling, but the data does provide enough information to act and advise end users of the potential situation.

Now, privacy of the data is always a concern when pushing something like this out the door. With this in mind, it is simple to see the data that is sent to the community Ceph project, where it is held privately for analysis. To view the data that would be sent, simply log in to a node with administrative rights on your cluster and issue these two commands:

ceph mgr module enable telemetry

ceph telemetry show

and all will be revealed.

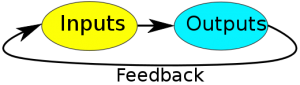
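The report is easy to eyeball, but on a larger cluster it can get long. As a minimal sketch (not part of Ceph), the hypothetical Python helper below assumes you have saved the preview to a file, for example with `ceph telemetry show > telemetry-preview.json`, and that the command prints the report as JSON. It searches every key and string value for terms you consider sensitive, such as hostnames, IP addresses, or your company name. The file name, the script name, and the `find_sensitive` function are illustrative only.

```python
"""Hypothetical helper: scan a saved telemetry preview for site-specific strings.

Assumes the preview was saved as JSON, e.g.:
    ceph telemetry show > telemetry-preview.json
"""
import json
import sys
from typing import Any, Iterator, List, Tuple


def iter_strings(node: Any, path: str = "") -> Iterator[Tuple[str, str]]:
    """Yield (json_path, text) for every dict key and string value in the report."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}/{key}"
            yield child, str(key)  # keys can leak names too, so check them
            yield from iter_strings(value, child)
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from iter_strings(value, f"{path}[{index}]")
    elif isinstance(node, str):
        yield path, node
    # Numbers, booleans, and null carry no free text, so they are skipped.


def find_sensitive(report: Any, terms: List[str]) -> List[Tuple[str, str]]:
    """Return (json_path, term) pairs where a term appears, ignoring case."""
    hits = []
    for path, text in iter_strings(report):
        lowered = text.lower()
        for term in terms:
            if term.lower() in lowered:
                hits.append((path, term))
    return hits


if __name__ == "__main__":
    # Usage: python3 audit_preview.py telemetry-preview.json node1 10.0.0.5 mycorp
    with open(sys.argv[1]) as fh:
        preview = json.load(fh)
    matches = find_sensitive(preview, sys.argv[2:])
    for path, term in matches:
        print(f"{term!r} found at {path}")
    print(f"{len(matches)} match(es)")
```

Each match is printed with its location in the report, so an empty result is what you would hope to see. Matching is a simple case-insensitive substring test, which errs on the side of reporting too much rather than too little.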

Enabling this feature provides a substantial amount of data to the Ceph project. This better equips the large community of developers to understand how clusters look in the real world, how they perform, and how they are carved up. It is, essentially, an automated feedback loop for the developers that helps quantify things like how adoption of a given release is tracking, and helps point out things that need to be better explained or validated during configuration to prevent undesirable outcomes.

If you ran the commands to show what is in the data, you can see that, from a privacy perspective, these metrics include no personally identifiable information (PII), nor any local identifiers or user data from your environment. The report is limited to configuration choices and version numbers. Over time, the data will be enhanced to include performance information and other counters that help the community understand how best to enhance Ceph, while still protecting your privacy.

If your organization is willing to share data, and I hope it is, please enable the Ceph Telemetry Module by following the easy steps outlined on the Ceph.com page. Just to make it easier, the key command is:

ceph telemetry on

This does require that the mgr nodes be able to push the data over HTTPS to the upstream servers, so make sure that your corporate firewall permits this traffic.
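Before turning the module on, it can be worth confirming from each mgr node that outbound HTTPS is actually allowed. The following Python sketch is not part of Ceph; it simply attempts a TCP connection and TLS handshake to the upstream host. The endpoint `https://telemetry.ceph.com/report` is our assumption of the default, so confirm the URL your telemetry module is configured to use and pass it as an argument. The script name and the `https_reachable` function are hypothetical, and the check connects directly, without honoring any HTTP proxy your environment may require.

```python
"""Hypothetical pre-flight check: can this mgr host open an HTTPS session upstream?"""
import socket
import ssl
import sys
from urllib.parse import urlsplit

# Assumption: the upstream telemetry endpoint; verify it against your cluster's configuration.
DEFAULT_URL = "https://telemetry.ceph.com/report"


def https_reachable(url: str, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection and TLS handshake to the URL's host succeed."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        raise ValueError(f"expected an https:// URL, got {url!r}")
    port = parts.port or 443
    context = ssl.create_default_context()  # verifies the server certificate
    try:
        with socket.create_connection((parts.hostname, port), timeout=timeout) as sock:
            with context.wrap_socket(sock, server_hostname=parts.hostname):
                return True
    except OSError:
        # Covers DNS failures, refused or timed-out connections, and ssl.SSLError.
        return False


if __name__ == "__main__":
    target = sys.argv[1] if len(sys.argv) > 1 else DEFAULT_URL
    ok = https_reachable(target)
    print(f"{target}: {'reachable' if ok else 'BLOCKED or unreachable'}")
    sys.exit(0 if ok else 1)
```

Run it as `python3 check_telemetry_egress.py` on each mgr node; a non-zero exit status suggests the firewall rules (or a required proxy) need attention before the report can be delivered.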

If you have any questions or concerns about the data, please reach out to your SUSE contact so that we can address the concerns and make sure that Ceph can benefit from your system’s feedback.

Now, just to confirm, this functionality IS present in SUSE Enterprise Storage 6. So, join the community by enabling this feature and help make Ceph an even better storage platform for your business.